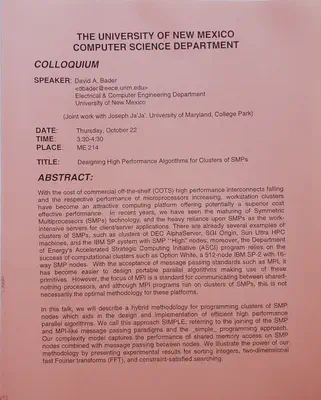

UNM Invited Talk: Designing High Performance Algorithms for Clusters of SMPs

Abstract

With the cost of commercial off-the-shelf (COTS) high performance interconnects falling and the performance of microprocessors increasing, workstation clusters have become an attractive computing platform, potentially offering superior cost-effective performance. In recent years, we have seen the maturing of symmetric multiprocessor (SMP) technology and a heavy reliance upon SMPs as the work-intensive servers for client/server applications. There are already several examples of clusters of SMPs, such as clusters of DEC AlphaServer, SGI Origin, and Sun Ultra HPC machines, and the IBM SP system with SMP ‘High’ nodes; moreover, the Department of Energy’s Accelerated Strategic Computing Initiative (ASCI) program relies on the success of computational clusters such as Option White, a 512-node IBM SP-2 with 16-way SMP nodes. With the acceptance of message passing standards such as MPI, it has become easier to design portable parallel algorithms that make use of these primitives. However, MPI is designed as a standard for communication between shared-nothing processors, and although MPI programs run on clusters of SMPs, this is not necessarily the optimal methodology for these platforms. In this talk, we will describe a hybrid methodology for programming clusters of SMP nodes that aids in the design and implementation of efficient high performance parallel algorithms. We call this approach SIMPLE, referring to the joining of the SMP and MPI-like message passing paradigms and to the simple programming approach. Our complexity model captures the performance of shared memory access within SMP nodes combined with message passing between the nodes. We illustrate the power of our methodology by presenting experimental results for sorting integers, two-dimensional fast Fourier transforms (FFT), and constraint-satisfied searching.