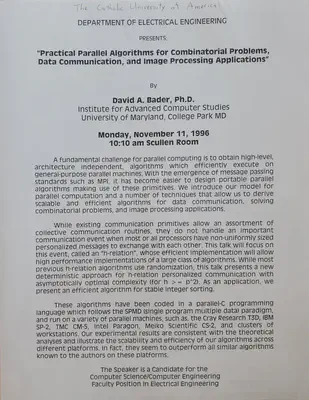

Catholic University of America (CUA) Talk: Practical Parallel Algorithms for Combiantorial Problems, Data Communication, and Image Processing Applications

Abstract

A fundamental challenge for parallel computing is to obtain high-level, architecture independet, algorithms which efficiently execute on general-purpose parallel machines. With the emergence of message passing standards such as MPI, it has become easier to design portable parallel algorithms making use of these primitives. We introduce our model for parallel computation and a number of techniques that allow us to derive scalable and efficient algorithms for data communication, solving combinatorial problems, and image processing applications. While existing communication primitives allow an assortment of collective communication routines, they do not handle an important communication event when most or all processors have non-uniformly sized personalized messages to exchange with each other. This talk will focus on this event, called an ‘h-relation’, whose efficient implementation will allow high performance implementations of a large class of algorithms. While most previous h-relation algorithms use randomization, this talk presents a new deterministic approach for h-relation personalized communciation with asymptotically optimal complexity (for h >= p^2). As an application, we present an efficient algorithm for stable integer sorting. These algorithms have been coded in a parallel-C programming language which follows the SPMD (single program mulitple data) paradigm, and run on a variety of parallel machines, such as, the Cray Research T3D, IBM SP-2, TMC CM-5, Intel Paragon, Meiko Scientific CS-2, and clusters of workstations. Our experimental results are consistent with the theoretical analyses and illustrate the scalability and efficiency of our algorithms across different platforms. In fact, they seem to outperform all similar algorithms known to the authors on these platforms.